Scrapingdog has the added advantage of a LinkedIn API, in addition to easily handling browsers, proxies, and CAPTCHAs. Plans start at $299 a month and suit tech companies and developers who need powerful web scraping tools. AvesAPI provides targeted structured data scraping from Google Search and is aimed at agencies and developers. AvesAPI is well suited to SEO because it uses a distributed system and can extract a large number of keywords quickly. This tool may also be useful to marketing professionals.

AutoScraper Tutorial - A Python Tool For Automating Web Scraping (Analytics India Magazine, posted Tue, 08 Sep 2020)

DOM parsing lets you parse HTML or XML documents into their corresponding Document Object Model representation. The DOM is a W3C standard that provides methods to navigate the DOM tree and extract the desired information from it, such as text or attributes. The re module is imported so that a regular expression can match the keyword entered by the user. pandas will be used to write our keywords, the matches found, and the number of occurrences to an Excel file. The startup currently has 18 employees and plans to expand quickly, possibly reaching 50 or more within a year if things continue at the current pace.
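A minimal sketch of the DOM-plus-regex idea, assuming a small, well-formed XHTML page (the `PAGE` string, its job titles, and the `keyword_report` helper are hypothetical). It uses the standard library's `xml.dom.minidom`, which is a strict XML parser, so messy real-world HTML would need a more forgiving parser. The keyword is passed as an argument here rather than read from user input.

```python
import re
from xml.dom import minidom

# Hypothetical, well-formed XHTML snippet used only for illustration.
PAGE = """<html><body>
  <div class="job"><h2>Python Developer</h2><a href="/jobs/python-dev.html">Apply</a></div>
  <div class="job"><h2>Senior Python Engineer</h2><a href="/jobs/senior-python.html">Apply</a></div>
  <div class="job"><h2>Java Developer</h2><a href="/jobs/java-dev.html">Apply</a></div>
</body></html>"""


def element_text(node):
    """Concatenate every text node beneath a DOM node."""
    if node.nodeType == node.TEXT_NODE:
        return node.data
    return "".join(element_text(child) for child in node.childNodes)


def keyword_report(xhtml, keyword):
    """Parse the page into a DOM tree and count keyword hits in each job title."""
    dom = minidom.parseString(xhtml)
    pattern = re.compile(re.escape(keyword), re.IGNORECASE)
    rows = []
    for title in dom.getElementsByTagName("h2"):
        text = element_text(title)
        matches = pattern.findall(text)
        if matches:
            # The link is the <a> element inside the same <div class="job">.
            link = title.parentNode.getElementsByTagName("a")[0].getAttribute("href")
            rows.append({"keyword": keyword, "title": text,
                         "link": link, "occurrences": len(matches)})
    return rows


if __name__ == "__main__":
    for row in keyword_report(PAGE, "python"):
        print(row)
```

To write the rows to Excel as the text describes, one option is `pandas.DataFrame(rows).to_excel("report.xlsx", index=False)`, which also needs an Excel writer engine such as openpyxl installed.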

This can be done simply by adding Thread.Sleep, after which the thread proceeds and locates the button. Instead of hardcoding the wait time, however, the wait can be handled more dynamically. Rather than specifying the entire path for the CSS selector, define a string check for a class that begins with btn.
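A fixed sleep always waits the full duration, while a dynamic wait polls until the element is ready and then returns immediately. The sketch below shows that polling pattern in plain Python with a simulated page (the `wait_until` and `starts_with_btn` helpers are hypothetical). In Selenium's Python bindings the library's own version is `WebDriverWait(driver, 10).until(EC.element_to_be_clickable((By.CSS_SELECTOR, "[class^='btn']")))`. Note that `[class^='btn']` matches only when the whole class attribute string starts with `btn`, so `class="primary btn"` would not match.

```python
import time


def wait_until(condition, timeout=10.0, poll_interval=0.5):
    """Poll `condition` until it returns a truthy value or `timeout` seconds pass.

    Unlike a fixed sleep, this returns as soon as the condition holds.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)


def starts_with_btn(class_attr):
    """Plain-Python equivalent of the CSS selector [class^='btn']."""
    return class_attr.startswith("btn")


if __name__ == "__main__":
    # Simulated page: the "button" only appears on the third poll.
    calls = {"n": 0}

    def find_button():
        calls["n"] += 1
        return "btn btn-primary" if calls["n"] >= 3 else None

    found = wait_until(find_button, timeout=2.0, poll_interval=0.01)
    print(found, starts_with_btn(found))
```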

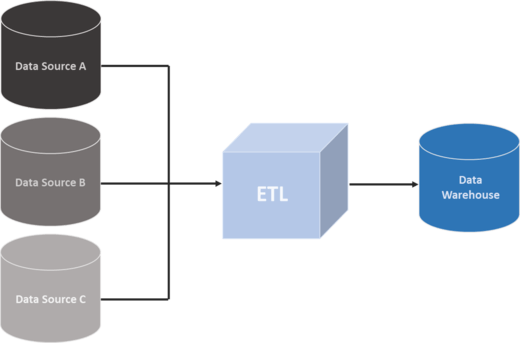

The specific URL ending in .html is the path to the job description's unique resource. The methods and tools you need to collect information using APIs are outside the scope of this tutorial. To learn more, take a look at API Integration in Python.
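As a small illustration of treating the .html filename as the job's unique resource, here is a sketch using only `urllib.parse` and `pathlib`. The example URL and the `job_resource` helper are hypothetical.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse


def job_resource(url):
    """Return the final path segment of a job URL if it is an .html resource."""
    # urlparse drops the query string and fragment, leaving only the path.
    path = PurePosixPath(urlparse(url).path)
    if path.suffix != ".html":
        return None
    return path.name


if __name__ == "__main__":
    # Hypothetical job-detail URL used only for illustration.
    example = "https://example.com/jobs/senior-python-developer-0.html"
    print(job_resource(example))  # senior-python-developer-0.html
```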

Use BeautifulSoup to parse the HTML scraped from the website. Before learning how to do web scraping with Selenium in Python and Beautiful Soup, you need to have all the prerequisites in place. Automate is an intuitive IT automation platform designed to help organizations of any size increase efficiency and maximize ROI across the organization.
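With Beautiful Soup installed, collecting titles typically looks like `BeautifulSoup(html, "html.parser").find_all("h2", class_="title")`. To keep the example runnable without third-party packages, the sketch below swaps in the standard library's `html.parser.HTMLParser` to do the same job. The `TitleCollector` class, the `extract_titles` helper, and the `h2.title` markup are hypothetical.

```python
from html.parser import HTMLParser


class TitleCollector(HTMLParser):
    """Collect the text of every <h2> element whose class list contains 'title'."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value may be None.
        classes = (dict(attrs).get("class") or "").split()
        if tag == "h2" and "title" in classes:
            self._in_title = True
            self.titles.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_title = False

    def handle_data(self, data):
        # Text inside nested tags such as <b> is still part of the title.
        if self._in_title:
            self.titles[-1] += data


def extract_titles(html):
    parser = TitleCollector()
    parser.feed(html)
    parser.close()
    return [title.strip() for title in parser.titles]


if __name__ == "__main__":
    sample = '<h2 class="title is-5">Python Developer</h2><h2>Ignored</h2>'
    print(extract_titles(sample))
```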

Using Methods To Extract Data From The Internet

What's more, Naghshineh reports that ARR has grown 20x year over year, and the company became cash-flow positive six months ago, a laudable milestone for such a young company. It has also managed to be remarkably capital-efficient: Naghshineh reports that he has spent just half of the $400,000 in pre-seed money the company received. Kevin Sahin worked in the web scraping industry for ten years before co-founding ScrapingBee. BS4 is a great choice if you decide to use Python for your scraper but don't want to be restricted by any framework requirements. Scrapy is definitely for an audience with a Python background. Although it works as a framework and handles much of the scraping by itself, it is still not an out-of-the-box solution and requires solid experience in Python.

- Web browsers display pages that let users quickly navigate between sites and parse information.

- Other options include storing the information in a database or converting it into a JSON file for an API.

- ParseHub uses machine learning to analyze the most complicated websites and generates output files in JSON, CSV, Google Sheets, or through an API.

- Although the browser executes JavaScript by itself and you don't need a script engine to run it yourself, it can still pose a problem.

- Instead of printing out all the jobs listed on the website, you'll first filter them using keywords.
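The keyword filtering and JSON output mentioned in the list above can be sketched in a few lines. The `JOBS` records below are hypothetical stand-ins for data a scraper would extract from parsed HTML, and matching is a simple case-insensitive substring check.

```python
import json

# Hypothetical scraped records; a real scraper would build these from parsed HTML.
JOBS = [
    {"title": "Senior Python Developer", "location": "Remote"},
    {"title": "Energy Engineer", "location": "Berlin"},
    {"title": "Python Programmer (Entry-Level)", "location": "London"},
]


def filter_jobs(jobs, keywords):
    """Keep only jobs whose title contains at least one keyword (case-insensitive)."""
    wanted = [keyword.lower() for keyword in keywords]
    return [job for job in jobs if any(k in job["title"].lower() for k in wanted)]


def to_json(jobs):
    """Serialize jobs to a JSON string, e.g. for writing to a file or serving from an API."""
    return json.dumps(jobs, indent=2)


if __name__ == "__main__":
    print(to_json(filter_jobs(JOBS, ["python"])))
```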

If you're interested, you can read more about the difference between the DOM and HTML on CSS-Tricks. Throughout the tutorial, you'll also encounter a few exercise blocks. You can expand them and test yourself by completing the tasks described there. Just a few clicks are needed to get a chatbot up and running on the Apify cloud at a reasonable price and with 24/7 support.

Example: Web Scraping With Beautiful Soup

It lets you scrape websites directly from your browser, without needing to install any tools locally or write scraping script code. The enormous amount of data on the Internet is a rich resource for any field of research or personal interest. To collect that data effectively, you'll need to become skilled at web scraping.

Essential Of Web scraping: urllib & Requests With Python (Analytics India Magazine, posted Wed, 09 Dec 2020)

If you're looking for a way to have public web data scraped regularly at a set interval, you've come to the right place. This tutorial will show you how to automate your web scraping processes using AutoScraper, one of the many Python web scraping libraries available. Your CLI tool could let you search for specific types of jobs or for jobs in particular locations. The requests library also comes with built-in support for handling authentication. With these techniques, you can log in to websites when making the HTTP request from your Python script and then scrape information that's hidden behind a login.
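With requests, HTTP Basic authentication is a one-liner: `requests.get(url, auth=("user", "pass"))`. The standard-library sketch below builds the same kind of `Authorization` header by hand so you can see what is sent. It only constructs the request; passing it to `urllib.request.urlopen` would actually send it. The URL, credentials, and `build_authenticated_request` helper are hypothetical, and many sites use form-based logins with session cookies rather than Basic auth.

```python
import base64
import urllib.request


def build_authenticated_request(url, username, password):
    """Build (but do not send) a GET request carrying an HTTP Basic Authorization header."""
    # Basic auth is "username:password", base64-encoded, prefixed with "Basic ".
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(url, method="GET")
    request.add_header("Authorization", f"Basic {token}")
    return request


if __name__ == "__main__":
    req = build_authenticated_request("https://example.com/account", "alice", "secret")
    print(req.get_method(), req.full_url, req.get_header("Authorization"))
```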

So, the process involves taking something from a page and repurposing it for another use. This information can take the form of text, images, or other elements. Did you consider adding the Norconex HTTP Collector to this list? It is easy to run, easy for developers to extend, cross-platform, powerful, and well maintained. A full-service web scraping provider is a better and more cost-effective option in such cases. Playwright was created to improve automated UI testing by eliminating flakiness, increasing execution speed, and offering insights into browser operation.